Local Differential Privacy for IoV

A survey of local differential privacy for securing internet of vehicles

Intro

The Internet of Vehicles (IoV) makes everyday life more convenient, but these benefits come at a high cost to data privacy.

In academia, differential privacy (DP) has been proposed and is regarded as an extremely strong privacy standard, one that formalizes both the degree of privacy preservation and data utility.

However, DP has a drawback in many practical scenarios: its paradigm relies on a trusted third party that collects the raw data.

Local differential privacy (LDP) has the potential to guarantee data privacy in IoV: the third party does not collect the exact data of each individual, yet it can still compute accurate statistical results.
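As a minimal sketch of how a collector can recover statistics without seeing exact data, the following uses binary randomized response, a classic LDP building block. The function names, the population of 10,000 vehicles, and the choice of ε = 1 are assumptions made for illustration; they do not come from the survey.

```python
import math
import random
from typing import List


def perturb_bit(true_bit: int, epsilon: float, rng: random.Random) -> int:
    """Report the true bit with probability e^eps / (1 + e^eps), else flip it."""
    p_truth = math.exp(epsilon) / (1.0 + math.exp(epsilon))
    return true_bit if rng.random() < p_truth else 1 - true_bit


def estimate_fraction(reports: List[int], epsilon: float) -> float:
    """Unbiased estimate of the fraction of users whose true bit is 1."""
    p = math.exp(epsilon) / (1.0 + math.exp(epsilon))
    observed = sum(reports) / len(reports)
    # E[observed] = f * p + (1 - f) * (1 - p), solved for f.
    return (observed - (1.0 - p)) / (2.0 * p - 1.0)


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical population: 30% of vehicles hold the sensitive bit 1.
    true_bits = [1] * 3000 + [0] * 7000
    reports = [perturb_bit(b, 1.0, rng) for b in true_bits]
    print("estimated fraction:", round(estimate_fraction(reports, 1.0), 3))
```

Each vehicle only ever sends a noisy bit, so the collector never learns any individual's true value, while the debiased average still converges to the true fraction as the number of reports grows.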

Contributions of this paper:

- first to survey existing work about LDP in terms of advantages, disadvantages, computation complexity, and the privacy budget

- first to investigate the potential applications of LDP in securing IoV and highlight the new challenges

LDP research

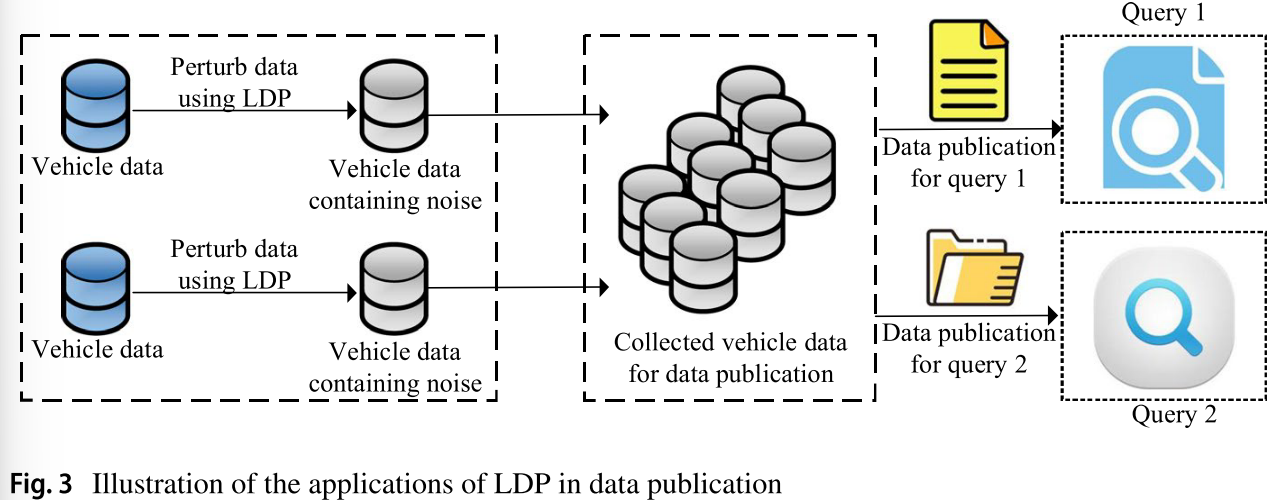

Data publication

Data publication based on local differential privacy mainly uses a non-interactive framework to release statistical information about sensitive data while keeping the released data useful for analysis. Commonly used data publication techniques include histograms, partitioning, and sampling filters.

The earliest work, RAPPOR, collects crowd-sourced statistics from users’ data without violating their privacy, but it has two disadvantages:

- high communication cost between users and the data collector

- data collector is required to collect candidate string lists in advance for frequency statistics

To reduce the communication overhead, S-Hist randomly selects bits, perturbs them with randomized response, and sends the perturbed data to the collector.

To deal with the second drawback of RAPPOR, O-RAPPOR handles variables whose values are unknown in advance by designing hash mapping and grouping operations.
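The two drawbacks discussed above can be seen in a simplified sketch: per-bit randomized response over a one-hot encoding, which is the core idea of basic RAPPOR without its Bloom filter and memoization steps. It is not the RAPPOR, S-Hist, or O-RAPPOR algorithm itself; the function names and the candidate list are hypothetical.

```python
import math
import random
from typing import Dict, List, Sequence


def perturb_one_hot(value: str, candidates: Sequence[str],
                    epsilon: float, rng: random.Random) -> List[int]:
    """One-hot encode `value`, then keep each bit with probability p, else flip it.

    Two one-hot vectors differ in at most two positions, so each bit uses
    budget eps / 2 to give eps-LDP for the whole report.
    """
    p_keep = math.exp(epsilon / 2) / (1.0 + math.exp(epsilon / 2))
    report = []
    for candidate in candidates:
        bit = 1 if candidate == value else 0
        report.append(bit if rng.random() < p_keep else 1 - bit)
    return report


def estimate_counts(reports: List[List[int]], candidates: Sequence[str],
                    epsilon: float) -> Dict[str, float]:
    """Debias the per-position sums into unbiased count estimates."""
    n = len(reports)
    p = math.exp(epsilon / 2) / (1.0 + math.exp(epsilon / 2))
    q = 1.0 - p
    estimates = {}
    for j, candidate in enumerate(candidates):
        ones = sum(report[j] for report in reports)
        # E[ones] = count * p + (n - count) * q, solved for count.
        estimates[candidate] = (ones - n * q) / (p - q)
    return estimates


if __name__ == "__main__":
    rng = random.Random(1)
    candidates = ["road_A", "road_B", "road_C"]  # hypothetical candidate list
    values = ["road_A"] * 5000 + ["road_B"] * 3000 + ["road_C"] * 2000
    reports = [perturb_one_hot(v, candidates, 2.0, rng) for v in values]
    print(estimate_counts(reports, candidates, 2.0))
```

Every report carries one bit per candidate, which is where the communication cost comes from, and the collector must know `candidates` before any data arrives, which is the second drawback.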

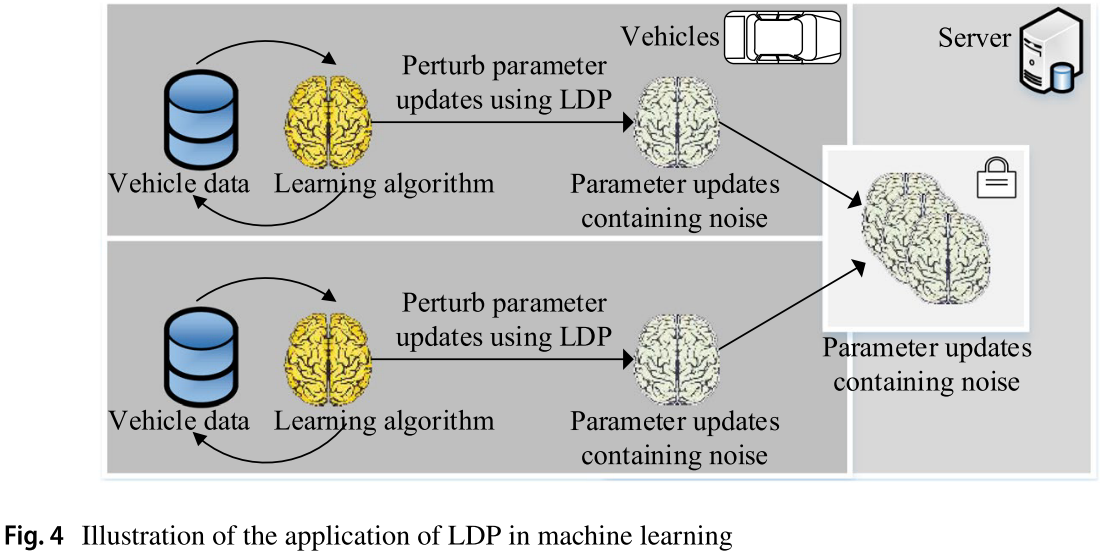

Machine Learning

The main idea of LDP-based machine learning is that users locally perturb, using LDP, the parameter updates computed on their own vehicle data, and the server obtains the global parameter update by collecting and aggregating these local updates.
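A rough sketch of this pattern follows, assuming each vehicle clips its update to a fixed range and adds Laplace noise before upload, and the server simply averages. The clipping bound, the noise calibration, and all function names are illustrative assumptions rather than a scheme described in the survey.

```python
import random
from typing import List


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two Exp(1) draws."""
    return scale * (rng.expovariate(1.0) - rng.expovariate(1.0))


def perturb_update(update: List[float], clip: float,
                   epsilon: float, rng: random.Random) -> List[float]:
    """Clip each coordinate to [-clip, clip], then add Laplace noise locally.

    Two clipped vectors differ by at most 2 * clip * d in L1 norm, so the
    noise scale 2 * clip * d / epsilon gives eps-LDP for the whole vector.
    """
    d = len(update)
    scale = 2.0 * clip * d / epsilon
    clipped = [max(-clip, min(clip, u)) for u in update]
    return [u + laplace_noise(scale, rng) for u in clipped]


def aggregate(noisy_updates: List[List[float]]) -> List[float]:
    """Server side: average the noisy local updates coordinate-wise."""
    n = len(noisy_updates)
    d = len(noisy_updates[0])
    return [sum(u[j] for u in noisy_updates) / n for j in range(d)]


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical setting: every vehicle computed the same local update.
    local_updates = [[0.5, -0.2] for _ in range(20000)]
    noisy = [perturb_update(u, clip=1.0, epsilon=1.0, rng=rng) for u in local_updates]
    print("global update estimate:", [round(x, 2) for x in aggregate(noisy)])
```

The noise on any single upload is large, but it averages out across many vehicles, which is why this design needs a large population to keep the global update useful.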

Regression analysis based on LDP is another research topic. Regression analysis is a commonly used method in machine learning that determines the quantitative relationship between two or more attributes in the input datasets. It involves two kinds of functions: the prediction function and the objective (or risk) function. Publishing the weight vector leaks the prediction function and information about the data in the datasets. To protect such data privacy in machine learning, a variety of work has applied LDP to regression analysis.

Whether noise is added to the weight vector or to the objective function, the cost of calculating the sensitivity of the weight vector remains high.

To address the problems above, FM (the functional mechanism) was proposed, which performs regression analysis while meeting local differential privacy. FM first perturbs the sum of the objective functions corresponding to each data tuple in the datasets and then obtains the weight vector by minimizing the perturbed objective function. In this process, the objective function is expressed as a polynomial and the noise scale is determined by its coefficients rather than by the sensitivity of the weight vector, so that sensitivity never has to be computed.
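A worked instance may make the polynomial trick more concrete. The following is a sketch for linear regression, assuming tuples $t_i = (x_i, y_i)$ with $x_i \in \mathbb{R}^d$, $|x_{ij}| \le 1$ and $|y_i| \le 1$; the symbols and the normalization are assumptions chosen for illustration. Expanding the squared loss gives a degree-2 polynomial in the weight vector $w$:

$$
f_D(w) = \sum_{i} \left(y_i - x_i^{\top} w\right)^2
       = \sum_{i} y_i^2
       \;-\; 2 \sum_{j=1}^{d} \Big(\sum_{i} y_i x_{ij}\Big) w_j
       \;+\; \sum_{j=1}^{d} \sum_{l=1}^{d} \Big(\sum_{i} x_{ij} x_{il}\Big) w_j w_l .
$$

Noise is added to each polynomial coefficient $\lambda$ rather than to $w$:

$$
\tilde{\lambda} = \lambda + \mathrm{Lap}\!\left(\frac{\Delta}{\epsilon}\right),
\qquad
\tilde{w} = \arg\min_{w} \tilde{f}_D(w).
$$

Under the normalization above, the absolute values of one tuple's coefficients sum to at most $1 + 2d + d^2$, so replacing one tuple changes the coefficient vector by at most $\Delta = 2\,(1 + 2d + d^2)$ in $L_1$ norm. This bound depends only on the dimension $d$, not on the data or on $w$.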

In summary, SVM classification based on Laplace noise has low classification accuracy and larger noise, while SVM classification based on perturbing the objective function applies only to specific objective functions. How to design a perturbation mechanism that offers high precision and applies to multiple objective functions is therefore a future research topic.

Query processing

The query processing technologies based on local differential privacy mainly focus on how to respond to queries with less privacy budget and lower error.

For example, for linear queries in the interactive framework, batch processing methods include the matrix mechanism and the low-rank mechanism.

Another line of work focuses on processing users’ queries with division-based methods.

In summary, the matrix mechanism is prone to suboptimal results in practice, and the low-rank mechanism only considers the correlations within the workload matrix and ignores the correlations in the data itself. How to design a general batch query processing mechanism based on the actual correlations in the data is therefore a future research direction.

Application of LDP system

Frank et al. were the first to introduce the local differential privacy method into recommendation systems. They assume that the recommendation system is untrusted: an attacker can infer a user’s private information by analyzing the system’s historical data, so the input to the recommendation system must be perturbed. When analyzing the relationships between items, they first build an item-similarity covariance matrix, add Laplace noise to the matrix, and then submit the noisy matrix to the recommendation system, which runs a conventional recommendation algorithm on it.
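The pipeline described above (build a covariance matrix, add Laplace noise, hand the result to an ordinary recommender) can be sketched as follows. This is an illustrative outline only: the `sensitivity` parameter is left as an input because its correct value depends on how ratings are bounded and weighted, and the function names and toy ratings are hypothetical.

```python
import random
from typing import List

Matrix = List[List[float]]


def item_covariance(ratings: Matrix) -> Matrix:
    """Item-item covariance over a users x items rating matrix."""
    n, d = len(ratings), len(ratings[0])
    means = [sum(row[j] for row in ratings) / n for j in range(d)]
    return [[sum((row[a] - means[a]) * (row[b] - means[b]) for row in ratings) / n
             for b in range(d)]
            for a in range(d)]


def add_laplace_noise(cov: Matrix, sensitivity: float,
                      epsilon: float, rng: random.Random) -> Matrix:
    """Add Laplace(sensitivity / epsilon) noise, keeping the matrix symmetric."""
    d = len(cov)
    scale = sensitivity / epsilon
    noisy = [row[:] for row in cov]
    for a in range(d):
        for b in range(a, d):
            # Laplace(0, scale) as a scaled difference of two Exp(1) draws.
            noise = scale * (rng.expovariate(1.0) - rng.expovariate(1.0))
            noisy[a][b] = cov[a][b] + noise
            noisy[b][a] = noisy[a][b]
    return noisy


if __name__ == "__main__":
    toy_ratings = [[5.0, 3.0], [1.0, 1.0], [3.0, 2.0]]  # 3 users, 2 items
    cov = item_covariance(toy_ratings)
    # sensitivity=1.0 is a placeholder assumption, not a derived bound.
    print(add_laplace_noise(cov, sensitivity=1.0, epsilon=0.5, rng=random.Random(0)))
```

Only the noisy matrix leaves this step, so the downstream recommendation algorithm can stay unchanged.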

Applications of LDP in IoV

We first introduce the attack models in IoV and then investigate the potential applications of LDP in several typical scenarios in IoV.

Attack model in IoV

- Malicious vehicle. On the one hand, malicious vehicles may deliberately disclose vehicles’ data to advertisers or illegal organizations. On the other hand, they may collude with others, send poisoned data, or deliberately drop out while IoV services are being completed, aiming to disclose other users’ private data or to paralyze the IoV system.

- Malicious server. A malicious server may be a passive or an active adversary. A passive adversary is curious about users’ data but follows the protocols honestly. In contrast, an active adversary may tamper with the protocols or actively launch attacks to disclose users’ private data.

Query services in IoV

A number of techniques have been proposed to protect users’ data privacy in academia.

Specifically, rule protocol-based privacy-preserving techniques were proposed first. However, obtaining vehicles’ authorization before using their data imposes high overhead on the server in IoV, because of the unique features vehicles exhibit, e.g., high mobility and short connection times.

Encryption techniques allow the server to collect and process the encrypted data of vehicles. Nevertheless, they are not applicable to large amounts of vehicle data because of their extremely high computation overhead.

Heuristic algorithms, e.g., dummy, k-anonymity, pseudonym, cloaking, and m-invariance, are quite lightweight compared to encryption techniques. However, all of them are vulnerable to side-information-based attacks.

In summary, LDP is relatively lightweight and thoroughly accounts for attackers’ side information, which makes it a promising way to protect vehicles’ data privacy in IoV query services.

Crowdsourcing in IoV

Much existing work has focused on protecting data privacy in crowdsourcing. Work [114] introduces a third party, the cloud, that takes on the storage and computation burden. Study [115] proposed a general feedback-based k-anonymity scheme to cloak users’ data. The literature [116] used random perturbation to mask users’ data and employed error-correcting codes to guarantee data utility. However, all of this work ignores attackers’ side information and is therefore susceptible to side-information-based attacks. Furthermore, it is not applicable to data privacy preservation in IoV, because vehicle density varies and vehicles move at high speed and stay connected only for a short time.

In such a case, LDP is applicable to protect vehicle users’ data privacy in crowdsourcing applications in IoV, as it thoroughly considers the available side information of attackers and is a lightweight privacy-preserving method.

Future research opportunities

In IoV, the complexity, diversity, and large-scale nature of vehicle data will add new data privacy risks. Therefore, we believe that LDP will face many new challenges:

- LDP for complex data types in IoV. At present, research on LDP mainly focuses on simple data types, e.g., frequency statistics or mean-value statistics on set-valued data that contains only one attribute. In IoV, however, the structural characteristics of vehicle data make the global query sensitivity extremely high and introduce excessive noise.

- LDP for various query and analysis tasks in IoV. At present, existing work on LDP has only investigated privacy preservation for two types of simple aggregate queries, i.e., counting queries over discrete data and mean queries over continuous data. Furthermore, the way data is perturbed generally depends on the type of query. IoV provides a wide variety of query services, and thus LDP faces many new challenges.

- High-dimensional vehicle data publication based on LDP. In IoV, the set-valued data contains many attributes, and the existing studies about LDP will not work, as they only focused on simple data types.

- Improvements in the LDP model for IoV. In practice, there is still no standard for choosing the value of the privacy parameter ε. Although the physical meaning of the parameters in k-anonymity and l-diversity is intuitive, the privacy preservation provided by ε-differential privacy is relatively vague, so how to choose ε remains an open problem.

- Considering correlations among vehicle data with LDP. Local differential privacy assumes that vehicle data are independent of each other, i.e., ignoring the correlations among vehicle data. However, in practice, vehicle data may be dependent.

- Combining the LDP model with other techniques, e.g., machine learning and AI. AI techniques are expected to provide potential solutions for IoV applications such as smart cities, intelligent transportation, travel route recommendation, environment monitoring, air quality navigation, and map navigation.
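To give the privacy parameter ε from the list above a somewhat more tangible reading, one common interpretation is that an ε-LDP report can raise an attacker's odds about any candidate value by at most a factor of e^ε. The helper below is an illustrative sketch of that bound; the function name and the sample priors are assumptions.

```python
import math


def max_posterior(prior: float, epsilon: float) -> float:
    """Upper bound on an attacker's belief after seeing one eps-LDP report.

    eps-LDP bounds the likelihood ratio of any output by e^eps, so the
    posterior odds are at most e^eps times the prior odds. Requires prior < 1.
    """
    odds = math.exp(epsilon) * prior / (1.0 - prior)
    return odds / (1.0 + odds)


if __name__ == "__main__":
    for eps in (0.1, 1.0, 3.0):
        # Hypothetical attacker who initially believes a value with probability 0.5.
        print(f"eps={eps}: belief can rise from 0.5 to at most "
              f"{max_posterior(0.5, eps):.3f}")
```

Read this way, a small ε leaves the attacker close to the prior belief, while a large ε allows near certainty, which is one way to compare candidate values of ε in the absence of a standard.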
